There’s a technology standard quietly spreading across every major AI platform right now. It doesn’t have a flashy product launch. It doesn’t have a celebrity CEO promoting it. But OpenAI, Google, Microsoft, and Anthropic have all agreed to build on top of it, a level of cross-industry consensus that rarely happens in technology.

It’s called the Model Context Protocol, and most people outside the developer world have never heard of it. That’s about to change. As AI agents move from novelty apps to tools that manage your calendar, send your emails, and operate autonomously on your behalf, MCP has become the backbone infrastructure that makes all of it possible, for better and, if you’re not careful, for worse.

Here’s what it is, why it matters, and what it means for your privacy the moment your AI agent asks for your phone number.

What Is MCP, in Plain English?

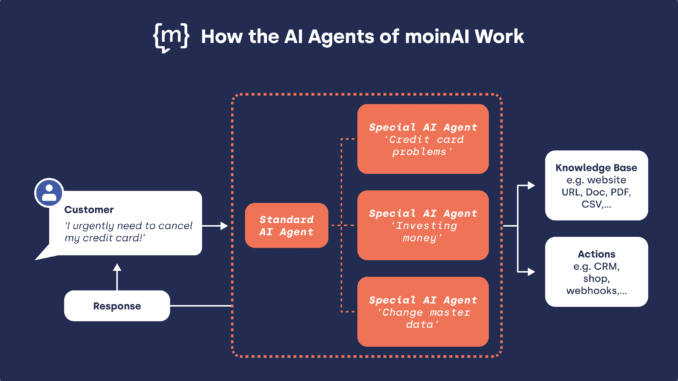

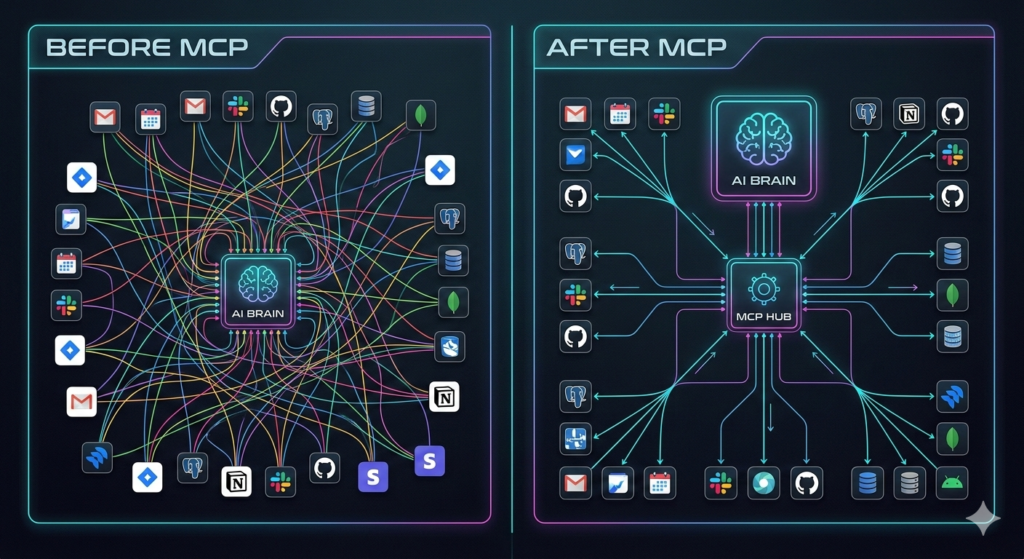

To understand the Model Context Protocol, you first need to picture what AI looked like before it existed. Every time a developer wanted to connect an AI assistant to a new tool (say, a company’s database, a calendar app, or a messaging platform), they had to build a custom bridge between the two systems. From scratch. Every single time.

If you had ten AI tools and ten data sources, that meant up to a hundred custom integrations. Messy, expensive, and constantly breaking when either side updated their software.

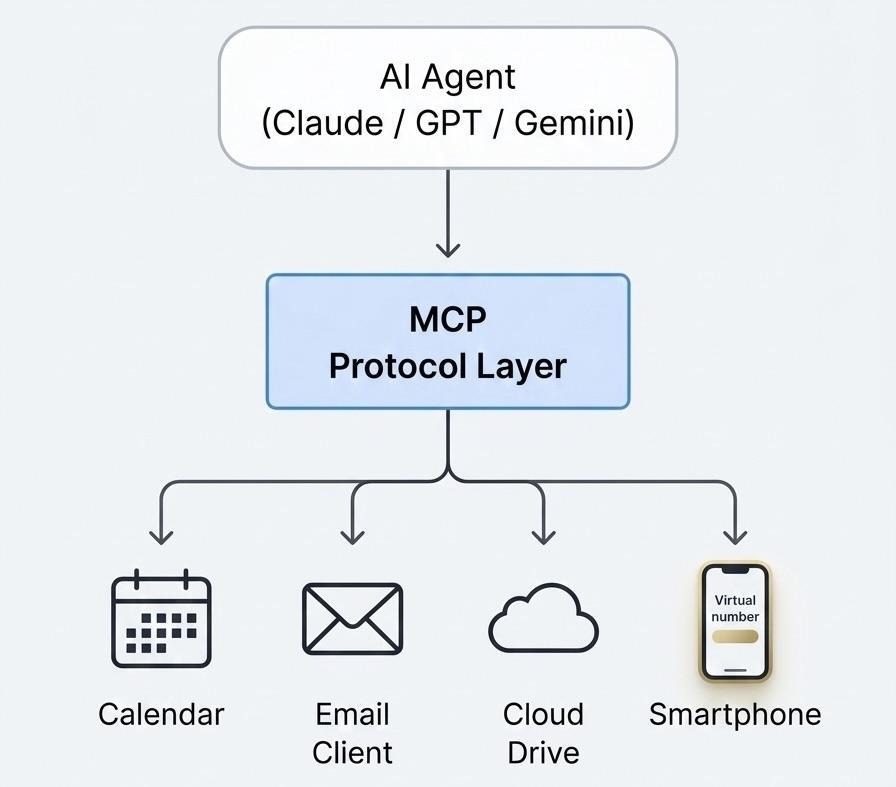

MCP, launched by Anthropic in November 2024 and now governed by the Linux Foundation, solves this with a single universal standard. Instead of a hundred custom bridges, you build one connection to MCP. Every AI on the protocol can then talk to every tool that supports it. The analogy that’s become common in tech circles: it’s the USB-C port for artificial intelligence. One plug, infinite compatibility.
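
The arithmetic behind that claim can be sketched in a few lines. This is a simplified model, not a measurement: it assumes the worst case of one custom bridge per AI-tool pair without a shared protocol, and exactly one protocol implementation per AI and per tool with one. The function names are illustrative only.

```python
def point_to_point(clients: int, tools: int) -> int:
    """Worst case without a shared protocol: one custom bridge per (client, tool) pair."""
    return clients * tools


def shared_protocol(clients: int, tools: int) -> int:
    """With a shared protocol, each client and each tool implements the standard once."""
    return clients + tools


if __name__ == "__main__":
    # 10 AI tools x 10 data sources -> 100 bridges versus 20 implementations.
    for n in (3, 10, 50):
        print(f"{n} x {n}: {point_to_point(n, n)} bridges vs {shared_protocol(n, n)} implementations")
```

The gap widens quickly: the pairwise count grows with the product of the two sides, while the shared-protocol count grows only with their sum.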

The uptake has been extraordinary. From 100,000 SDK downloads in November 2024, MCP surged to 97 million monthly downloads by early 2026. OpenAI officially adopted the protocol in March 2025. Google DeepMind followed. Microsoft embedded it across its Azure AI infrastructure. What started as Anthropic’s open-source project has become the closest thing the AI industry has to a shared foundation.

Why Every Major Tech Company Got Behind It

Genuine consensus in Big Tech is rare. OpenAI and Anthropic are direct competitors. Google builds its own AI infrastructure with formidable resources. Microsoft has deep proprietary investments. For all of them to converge on the same protocol is the kind of signal that usually marks a genuine inflection point, not a hype cycle.

The clearest indicator of where this is heading came in February 2026, when OpenAI acquired OpenClaw, one of the most widely used MCP-powered AI agents, with over 145,000 GitHub stars. OpenClaw lets users connect a single AI agent to WhatsApp or Telegram as its primary interface, then gives it access to files, emails, calendars, and the web, all coordinated through MCP.

The acquisition sent a direct signal: MCP-based AI agents aren’t a developer experiment anymore. They’re the product roadmap. OpenAI is betting that the future of personal AI is autonomous agents that live inside the tools you already use, and MCP is what makes that technically feasible at scale.

The Linux Foundation’s governance of the protocol ensures it stays open rather than locked to any single vendor’s ecosystem. In December 2025, Anthropic formally donated MCP to the Agentic AI Foundation, a Linux Foundation fund it co-founded with OpenAI and Block. The protocol now belongs to the community, not a corporation, which is exactly why every major player has been willing to build on top of it.

What This Means for Everyday AI Users

For most people, the visible effect of MCP is straightforward: AI assistants suddenly get much more useful. An agent built on MCP can, within a single conversation, check your inbox, update a spreadsheet, schedule a meeting, search the web, and send a message. That’s not because it has magical abilities, but because MCP gives it a standardized way to plug into each of those systems.
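
To make "standardized" a little more concrete: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and an arguments object. The sketch below only builds such requests; it does not connect to any server. The tool names `create_event` and `send_message` and their arguments are made up for illustration, since real servers define their own.

```python
import json


def tool_call(request_id: int, name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request in the shape MCP uses for tool invocations."""
    request = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }
    return json.dumps(request)


if __name__ == "__main__":
    # Hypothetical tools: the envelope is identical, only name and arguments change.
    print(tool_call(1, "create_event", {"title": "Team sync", "start": "2026-03-02T10:00"}))
    print(tool_call(2, "send_message", {"to": "team", "text": "Meeting booked"}))
```

The point is that a calendar and a messaging app receive the same kind of request, so the agent needs one client, not one integration per system.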

This is the vision that’s been promised for years. “Just ask your AI” has finally started to mean something real, because the plumbing underneath is now standardized enough for it to work reliably.

But MCP-powered AI agents don’t operate in isolation. They need accounts. They need identities within the apps they connect to. An agent that runs on WhatsApp, like OpenClaw before its acquisition, needs a WhatsApp account to function. A Telegram-based agent needs a Telegram account. And both of those require a phone number to register.

That’s a detail most users click past without thinking. It shouldn’t be.

The Privacy Gap Nobody Is Talking About

In April 2025, security researchers published a detailed analysis of MCP’s outstanding vulnerabilities. The list was sobering: prompt injection attacks that trick agents into taking unintended actions, tools that can be over-permissioned to exfiltrate data, and malicious server impersonation that allows bad actors to silently replace trusted integrations.
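
One common mitigation for the over-permissioning item on that list is a per-agent allowlist checked before any tool call is forwarded. The sketch below is a generic pattern, not part of the MCP specification, and `ToolPolicy` and the tool names are hypothetical. It does nothing about prompt injection or server impersonation, which need separate defenses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical per-agent policy: only explicitly listed tools may be called."""
    allowed: frozenset

    def check(self, tool_name: str) -> None:
        # Deny by default: anything not on the list is rejected.
        if tool_name not in self.allowed:
            raise PermissionError(f"tool {tool_name!r} is not allowed for this agent")


if __name__ == "__main__":
    # A scheduling agent that can read and write the calendar, nothing else.
    policy = ToolPolicy(allowed=frozenset({"read_calendar", "create_event"}))
    policy.check("create_event")  # passes silently
    try:
        policy.check("send_email")
    except PermissionError as err:
        print(err)
```

Denying by default means a newly installed or silently swapped tool gets no access until someone adds it to the list on purpose.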

Separately, Cisco’s threat intelligence team documented serious security flaws in OpenClaw, including a high-severity CVE (CVSS score 8.8) and evidence of over 230 malicious skills circulating in the community-built ecosystem. Researchers found more than 21,000 exposed instances with inadequate authentication.

Here’s why the phone number matters in this context. When your AI agent is registered to your personal number, a breach doesn’t just compromise an app login. It exposes your real identity. Your WhatsApp or Telegram account tied to your actual phone number becomes the attack surface. From there, social engineering, SIM-swap attempts, or simple data harvesting all become more viable.

This isn’t theoretical. Security researchers at RSA 2026 demonstrated how an MCP vulnerability could enable remote code execution across connected enterprise systems. If that sounds distant from your personal AI agent, consider that the same protocol governing enterprise deployments governs the agent running on your personal Telegram account.

The practical solution that privacy-conscious users and developers have arrived at: use virtual phone numbers to register AI agent accounts. A dedicated virtual number gives the agent everything it needs (a working identity, SMS verification, and messaging capability) without exposing your real number to any of the downstream systems the agent connects to.

How Developers Are Already Solving This

Inside developer communities, separating personal identity from AI agent identity has quietly become standard practice. The logic is clean: if you’re building an agent that will autonomously access your tools and data, you don’t want a single compromised integration to expose the phone number you’ve had for ten years.

The most common approach is provisioning a dedicated temporary phone number for each agent deployment, or maintaining a private virtual number specifically for AI-related accounts. Services like Quackr have emerged as practical infrastructure for this workflow, offering non-VOIP virtual numbers across 30+ countries that work reliably with WhatsApp, Telegram, and other major platforms that MCP agents typically interface with.

Quackr has taken this further with a dedicated MCP server (mcp.quackr.io) that allows AI agents themselves to programmatically provision phone numbers, receive SMS verifications, and release numbers when they’re no longer needed, all without human intervention. It’s a sign of how the infrastructure layer is evolving: privacy tooling is becoming as automated as the agents it protects.
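
The provision-then-release lifecycle is worth getting right, because a number that is never released keeps an identity alive longer than intended. Below is a minimal sketch of that pattern using a context manager. It does not use Quackr’s actual API, which is not described here: `NumberProvider`, `InMemoryProvider`, and `dedicated_number` are hypothetical names, the provider is an in-memory stand-in that hands out fake numbers, and SMS retrieval is left out entirely.

```python
from contextlib import contextmanager
from typing import Iterator, Protocol


class NumberProvider(Protocol):
    """Hypothetical interface; a real service would define its own calls."""
    def provision(self, country: str) -> str: ...
    def release(self, number: str) -> None: ...


class InMemoryProvider:
    """Stand-in for a real service: issues fake numbers and tracks which are active."""

    def __init__(self) -> None:
        self.active: set = set()
        self._issued = 0

    def provision(self, country: str) -> str:
        self._issued += 1
        number = f"{country}-TEST-{self._issued:04d}"
        self.active.add(number)
        return number

    def release(self, number: str) -> None:
        self.active.discard(number)


@contextmanager
def dedicated_number(provider: NumberProvider, country: str) -> Iterator[str]:
    """Provision a number for one agent setup and always release it afterwards."""
    number = provider.provision(country)
    try:
        yield number
    finally:
        # Runs even if registration fails partway through.
        provider.release(number)


if __name__ == "__main__":
    provider = InMemoryProvider()
    with dedicated_number(provider, "US") as number:
        print("registering agent with", number)
    print("numbers still active:", len(provider.active))
```

Wrapping the lifecycle this way means a failed registration cannot leave a number dangling, which matters when the whole flow runs unattended.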

For individual users who aren’t developers, the approach is simpler: before setting up any MCP-connected AI agent that requires a messaging account, receive SMS online through a virtual number service and register the agent to that number instead of your personal one. The agent works identically. Your real identity stays out of the loop.

What Comes Next for MCP

The protocol isn’t standing still. In 2026, MCP is expanding toward multimodal support, meaning agents won’t just read and write text; they’ll process images, audio, and video through the same standardized interface. The emerging Agent2Agent (A2A) protocol is being built to work in parallel with MCP, allowing multiple AI agents to coordinate with each other on complex tasks. MCP Apps, announced in January 2026, will allow agents to render interactive interfaces such as dashboards, forms, and embedded workflows directly inside conversations.

Each of these expansions means more of your digital life running through protocol-connected agents. More convenience, yes. But also a wider surface area: more integrations, more accounts, and more systems with some access to your context.

The governance decisions happening now (who controls the protocol, how security standards evolve, and what defaults look like) will shape how personal and trustworthy this infrastructure becomes. The Linux Foundation’s stewardship is a genuinely positive signal. So is the rapid pace of security research.

MCP started as a developer tool. It’s rapidly becoming something closer to utility infrastructure, the invisible layer underneath how most people will interact with AI within the next few years. Understanding it now, before it becomes ubiquitous, means you can make deliberate choices about what you connect to it and under what identity. That’s not paranoia. It’s just how thoughtful people have always approached new infrastructure, asking what it does before asking what it can do for them.
